
Multimodal Composition

Multimodality is a central focus of computers and composition scholarship. Recent volumes of Computers and Composition, Computers and Composition Online, and Kairos, for example, offer examples of assignments that require students to create multimodal compositions (Adams, 2014; Briggs, 2014; Klein, 2014), argue for the value of multimodal instruction (Rankins-Robertson et al., 2014), and explore strategies for multimodal assessment (Burnett et al., 2014; Charlton, 2014). In general, our field accepts the argument that alphabetic language does not accurately describe the literacy practices of people in the internet age, and, as such, we need to re-define “writing” as multimodal (Lunsford, 2007) and reorganize schooling around the products of digital literacy (Collins & Halverson, 2009).

However, while this topic is well-researched and well-theorized, on most college campuses, there is still considerable resistance to shifting literacy instruction from print products to digital projects. Selfe (2004) points out that this resistance is in part due to the fact that college writing instructors are predisposed to “privilege alphabetic literacy … because [they] have some status as practitioners and specialists of writing” (71), but are not credentialed authorities on multimodal composition. Somewhat similarly, Graff & Duffy (2008) argue that the resistance to non-alphabetic communication stems from a view of the alphabet as the beginnings of “civilized” society; people fear that non-alphabetic literacies will cause our prosperity to decline. Both arguments point to concerns about changing values and authority structures, which is precisely why it is so important to continue defining and discussing multimodal composition.

I present a discussion of multimodal composition that summarizes existing arguments for the integration of visuals and sound into writing assignments, as well as the challenges of teaching multimodal composition, and then extends this scholarship to define multimodal composition as a characteristic of digital literacy. I also break multimodal composition into three categories—visuals, sound, and video—and enact the literacies I discuss by presenting text accompanied by images to describe the history of visuals in the composition classroom, a podcast to discuss the integration of sound into college writing instruction, and a video to consider the challenges of teaching multimodal composition.

Visuals

The incorporation of images into literacy instruction is not new; scholars have been advocating for visuals since the 1940s. However, while students were asked to use visuals as prompts for alphabetic writing or as artifacts to analyze, they were not asked to produce visual artifacts. Instead, the arguments for visuals in literacy instruction throughout most of the 20th century went something like this: young people need to critically consume information, and information is often delivered via images or television; consequently, we must account for visuals in literacy instruction. This line of reasoning not only privileges consumption over production, but also carries a caveat that words are superior to images (George, 2002).

In 1996, the New London Group proposed we think about literacy in terms of design and urged educators to ask students to create media instead of studying it from a distance. The timing of the New London Group’s seminal article—three years after the internet became public—is no coincidence, and in the subsequent decade the digital tools that enable media production became increasingly available. Media production particularly skyrocketed when “podcasting” was coined in 2004 and YouTube was launched in 2005 (Leander & Lewis, 2008). As George (2002) puts it, both students and “teachers of English composition” suddenly “had the means to produce communication that went far beyond the word” (31).

This new capacity to produce and distribute digital media has had a profound impact on our society. As Gunther Kress famously argues, the dominance of images is causing a fundamental shift in the way we read, write, and experience creativity—we are moving away from the temporal logic of words and toward the spatial logic of images. Kress (2003) asserts that while written texts follow a linear, causal structure, where the author guides the reader to intended conclusions, visual texts are more dependent on the reader to determine the structure. In a written text, the author creates “a clear path, which [has] to be followed” and the reader’s task is to interpret and transform “that which [is] clearly there and clearly organized” (2003, 162). With visual communication, the viewer is required to order “the simultaneously present elements in relation to her or his interest” (Kress, 2005, 13).

Kress applies this logic of the visual to the way people interact with websites, which (despite the amount of written text) adhere to spatial rather than temporal logic. When viewing a website, Kress argues, the reader must apply “principles of relevance to a page which is (relatively) open in its organization, and consequently offers a range of possible reading paths, perhaps infinitely many” (2003, 162). In this way, visual communication requires the reader to take more responsibility for the meaning of the text and to view texts (visual or written) as things that need to be interpreted and synthesized with other pieces of information, rather than completed artifacts that supply all of the information up front.

With that being said, it is not as though visual texts lack authorial structure. As Wysocki (2005) argues, written texts are spatially designed and images contain temporal elements. Any technical writer or book editor will tell you that written words do not lack visual design, and any graphic designer or usability expert will argue that the author of a visual text has a clear influence on the viewer’s interpretation and meaning making. In a direct challenge to Kress, Wysocki contends that the “temporal strategies of composition are very much present even in images that we can apparently perceive all at once” (58).

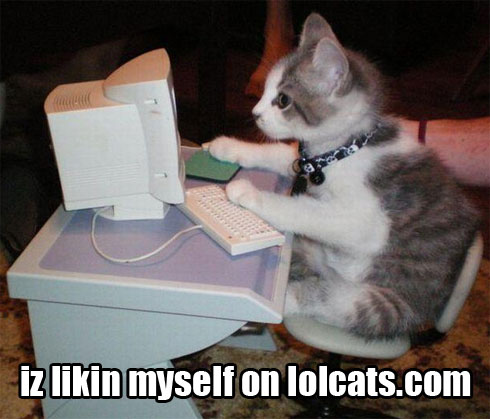

While Wysocki’s point is well taken, there is something different about the way we interact with a website than how we interact with a bound book or even a PDF. Possibly, the difference has to do with our cultural context for interpreting visual artifacts; it might be that because fewer rules (grammars) have been established for visual communication, context is more important. If I read something I don’t understand, I can look up the word in the dictionary, but if I see a LOL cat meme and don’t understand it, I have to find a member of the community to explain it to me (which generally requires my becoming a member of the community). An academic webtext such as this one is more similar to the bound book or the PDF than a meme, but the point stands that increased reader control shifts the role of context in meaning making.

Consequently, when we incorporate visuals into our writing courses with the intention of facilitating students' digital literacy acquisition, we need to ask students to not only create visuals, but also consider the ways combining visuals with text influences (and is influenced by) notions of authorship and community dynamics.

Sound

In addition to visuals, sound is an important aspect of digital literacy, but it has not received as much attention in the literature. To explore this concept in more depth, and to demonstrate the differences in reading and listening, I've created an audio podcast that describes (1) asynchronous versus synchronous communication, (2) the history of sound in writing classrooms, and (3) an example from the literature (Comstock & Hocks, 2006) of asking students to integrate sound into compositions. Please click the play button to listen.

Video

Throughout this page, I've argued that sound and visuals are key elements of digital literacy in the internet age, but are not replacements for alphabetic literacy. Instead, digitally literate people are able to choose between and combine multiple modes to communicate effectively. One of the most popular ways to facilitate such combinations is to require students to create videos. Consequently, I created the video below to offer an example and to discuss some of the challenges of multimodal composition, namely meeting audience needs and expectations while also creating a composition that is distinct from a mono-modal version. Please click the play button to watch.

Teaching Multimodal Composition

Thinking about multimodal composition as a characteristic of digital literacy has several pedagogical applications.

First, for digital literacy to be a learning outcome in composition courses, students need to produce digital compositions. The production of digital media is necessarily multimodal because composers must choose from a variety of modalities and often combine modes. There is a plethora of literature with specific examples and strategies for incorporating such assignments into college writing classrooms (to begin, I recommend: Bowen & Whithaus, 2013; Briggs, 2014; Selfe & Selfe, 2008).

Second, composition courses should address sound. The inclusion of sound as a compositional mode complicates our notion of literacy because it blurs the lines between asynchronous and synchronous communication. Traditionally, synchronous exchanges are both verbal and face-to-face, while asynchronous exchanges are written. Digital tools allow us to create asynchronous verbal communications and synchronous written communications, thus productively challenging our notions of “talking” and “writing.” Instructors can ask students to engage in synchronous text chats and asynchronous audio/video discussions (e.g., posting a podcast to an asynchronous discussion forum) that discuss these ideas.

Third, assessing multimodal composition is not the same as assessing print composition because multimodal compositions are not necessarily linear and may not use textual grammars. As evidenced in the recent Computers and Composition special edition on multimodal assessment (volume 31), the issue of evaluation is an important part of the conversation in our field right now. As I argue in the conclusion to this text, one application of treating digital literacy as a learning outcome is that the three characteristics of digital literacy (multimodal composition, information, and collaboration) can become criteria on rubrics, measuring the extent to which students’ multimodal compositions demonstrate digital literacy skills.

Importantly, multimodal composition is only one of three characteristics of digital literacy. This point is particularly important when we think about learning outcomes because the pedagogical strategies differ considerably depending on whether the end goal of a composition course is to teach multimodal composition or to teach digital literacy. As I argue in the following pages of this webtext, extending our focus beyond multimodal composition to also include information and collaboration more robustly accounts for the social context of the internet age and thus enables our students to acquire more enduring literacy skills.

For more information about literacy instruction, see History of Literacy Instruction and Teaching Digital Literacy. For more information about the relationship between literacy and digital tools, see The Problem of Tool Use.

Created by Mary K. Stewart (2014)