Data Analysis Method & Themes

On this page, I provide a glimpse into my analysis process, particularly for open-ended questions, grouping my discussion around the three main categories of findings that structure the webtext: implementations, motivations, and multiliteracies. I include screenshots of only limited sections of the analysis because I do not want to risk making connections that could compromise respondent anonymity.

For much of the data analysis, I used the tools afforded by Survey Monkey, which allowed for some sophisticated sorting of the data. However, I also used my own rudimentary process of sorting and coding data in Excel, developing and re-sorting categories as I went through the analysis process and making connections across questions. Manually entering much of this information into Excel allowed me to see trends and ask new questions of the data.

Implementations

One of the primary questions in this study asked the WPAs to indicate if they encourage any program-wide instantiations of digital literacy (as opposed to just the practices of an individual teacher).

Is digital literacy formally encouraged at a programmatic level in your writing program?

Yes | No | Unsure | Other

After answering this question, the WPAs were asked to follow up by explaining their answer. The responses to this question formed an initial basis to help me analyze WPA practices.

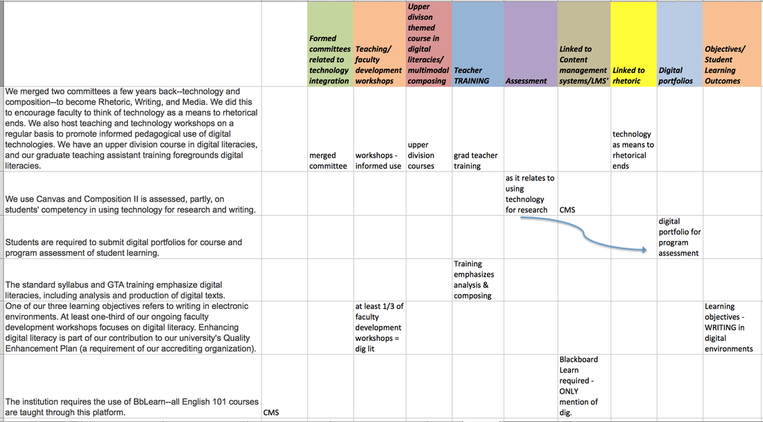

As the above image shows, I took the open-ended responses and began creating color-coded categories for each different way the WPAs encourage digital literacy programmatically, with one category for each distinct mention. For example, the second response above mentioned using Canvas (an LMS) and assessing students' competency in using technology for research, so three categories were created: assessment, research, and LMS. The initial process created over 35 colored categories (I've included a screenshot of only a small portion).

I also took notes that would help me offer more specific results in my reporting of the data. For example, for the category of faculty development workshops, if the WPA mentioned that the workshop involved training to use an LMS or training in pedagogy, I made a note below the colored category so that I could later analyze just the faculty development category to determine whether workshops were more often related to functional or pedagogical training.

After the initial sorting process, I began to combine categories that overlapped. For example, I combined faculty development workshops and teacher training into one training category. I then counted the number of programs that mentioned each type of digital literacy integration. This process allowed me to make claims such as the following: WPAs most often mentioned supporting digital literacy through training initiatives (mentioned in 41% of responses) and student learning outcomes or program objectives (30%). However, I was careful not to claim that other programs do not engage in these activities, as it's possible the WPAs did not mention every instantiation in their response. Yet, their immediate responses reveal valuable information about what they believe to be the primary programmatic levels of support in their programs.
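The merging and counting step described above could be sketched in Python roughly as follows. This is only an illustration of the logic, not the Excel process actually used: the sample responses, the `MERGE` mapping, and the function names are all hypothetical. Each response is stored as a set of categories, so a response that mentions two merged categories still counts once.

```python
from collections import Counter

# Hypothetical coded responses: each set holds the categories
# assigned to one WPA's open-ended answer.
coded_responses = [
    {"faculty development workshops", "LMS"},
    {"teacher training", "student learning outcomes"},
    {"LMS", "assessment", "research"},
    {"student learning outcomes"},
]

# Illustrative mapping that folds overlapping categories into one label.
MERGE = {
    "faculty development workshops": "training",
    "teacher training": "training",
}


def merge_categories(codes, mapping):
    """Replace each category with its merged label, if it has one."""
    return {mapping.get(code, code) for code in codes}


def mention_shares(responses, mapping):
    """Return the share of responses that mention each merged category."""
    merged = [merge_categories(codes, mapping) for codes in responses]
    counts = Counter(code for codes in merged for code in codes)
    total = len(merged)
    return {code: count / total for code, count in counts.items()}


shares = mention_shares(coded_responses, MERGE)
for code, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{code}: {share:.0%}")
```

Because the shares describe mentions rather than practices, a low share here would say only that few respondents named that category, not that few programs engage in it.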

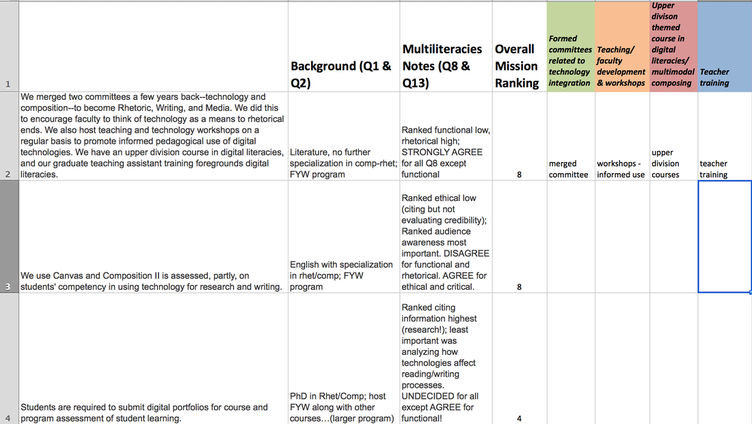

Because this was a primary question for the study, I also looked for themes across questions and tried to examine how certain responses related to others. As the screenshot below shows, in a different analysis phase, I looked at each WPA's educational background, the type of program they teach in, their ranking of digital literacy's relationship to the overall program mission (survey section 3, question 3), and their responses to the questions about multiliteracies, mapping those responses onto the ways in which they indicated digital literacies are implemented in their programs.

Engaging in this different level of analysis allowed me to discern a few useful trends:

- Those WPAs whose only mention of programmatic digital literacy use was in relationship to the program's Learning Management System (LMS) tended to rank critical digital literacy low, indicating that while some of these WPAs believe they are integrating digital literacy, the functional use of a tool is valued more than critical analysis or reflection on its impact on composing. The descriptions of the use of LMSs and digital portfolios across questions tended to discuss these tools mainly in relationship to serving as repositories for student work or enhancing efficiency for teachers.

- Workshops tended to be described as focusing on teaching faculty technical skills, whereas teacher training was described more often as supporting teachers as they created specific assignments.

- Almost all of the WPAs who indicated their program had Student Learning Outcomes related to digital literacy also ranked the importance of digital literacy to the program's overall mission as at least moderately important. While perhaps not surprising, this implies that WPAs who are committed to digital literacy are using SLOs as a strategy to embed digital literacy into the program. This could be useful for WPAs who may not wish to intervene at the level of individual assignments.

- Both in tracking the ways WPAs described their programs' digital literacy implementations and cross-comparing this with the ways in which they talked about their multiliteracies rankings, I found it evident that research skills were a primary reason for implementing technology in these programs.
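A cross-question comparison of the kind behind the first trend above (LMS-only implementations set against critical digital literacy rankings) might be sketched as follows. The records, the field names, and the assumption that a rank of 1 means highest priority are hypothetical; the survey's actual ranking scale is not reproduced here.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical respondent records. "critical_rank" assumes 1 = highest priority.
respondents = [
    {"id": "r1", "implementations": {"LMS"}, "critical_rank": 4},
    {"id": "r2", "implementations": {"LMS", "training"}, "critical_rank": 2},
    {"id": "r3", "implementations": {"student learning outcomes"}, "critical_rank": 1},
    {"id": "r4", "implementations": {"LMS"}, "critical_rank": 3},
]


def group_label(record):
    """Separate respondents whose only coded implementation is the LMS."""
    return "LMS only" if record["implementations"] == {"LMS"} else "other"


def mean_rank_by_group(records, field):
    """Average the given ranking field within each group."""
    groups = defaultdict(list)
    for record in records:
        groups[group_label(record)].append(record[field])
    return {label: mean(values) for label, values in groups.items()}


summary = mean_rank_by_group(respondents, "critical_rank")
print(summary)
```

With a sample this small, a difference between group averages is a prompt for rereading the responses rather than evidence of a relationship.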

Motivations

Another important open-ended question was related to WPAs' beliefs about the role of digital literacy in the composition curriculum.

What role should digital literacy play in the composition classroom?

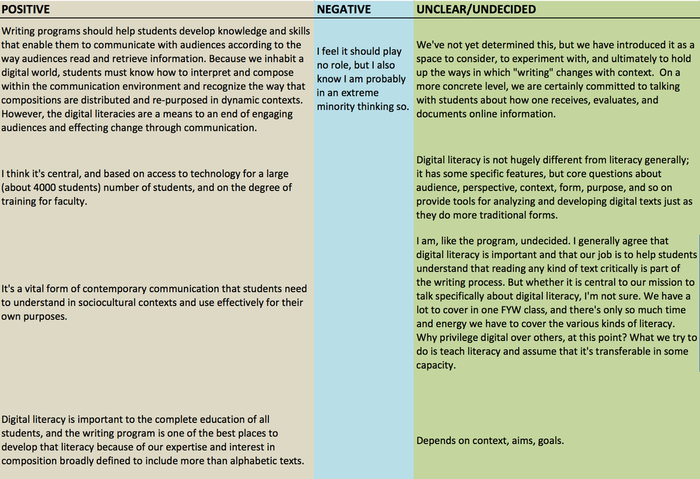

My initial approach was to sort the data by positive and negative responses. I also included an undecided/unclear category. As the chart below shows, I grouped responses accordingly. If a response indicated that the WPA felt digital literacy was important but had not been able to implement it programmatically, it was coded as positive, but the program's inability to implement digital literacy was noted in my analysis. Some responses were coded as unclear because the WPA did not make a value judgment either way (such as the second and fourth responses under "unclear" below), whereas others were coded this way because the WPA explicitly stated that he or she was still undecided.

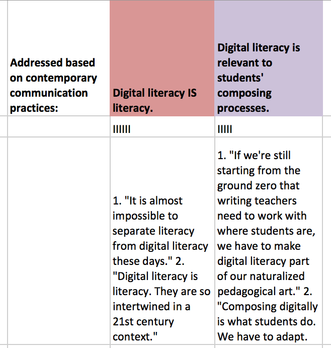

Later, once I had made general claims about WPAs' attitudes towards digital literacy as being positive or negative, I further broke down those responses. For example, I took all positive responses, put them in a spreadsheet, and categorized the reasons why these WPAs indicated digital literacy was important. I followed a process similar to the one I describe in section A above. As I describe on the Motivations page, the WPAs responded to this question in different ways. As an example, some justified their use of digital literacy based on the notion of keeping up with contemporary composing practices. I counted the number of responses that offered this justification, as depicted below. I kept representative quotes from the responses below each category to refresh my memory when connecting this analysis to other questions.

I also connected the motivations question to other questions.

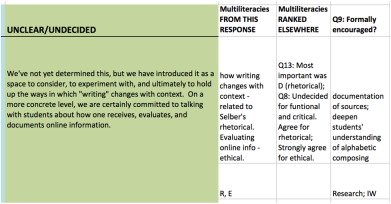

As shown in the screenshot above, this WPA's response mentioned how writing changes in a digital context, an element Selber discusses as part of rhetorical literacy, and it also mentioned evaluating online information, an element Coley emphasizes as part of ethical literacy. I therefore assigned an R (Rhetorical) and an E (Ethical) code, which I used to help me connect multiliteracies values to the positive, negative, and undecided responses.
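Once each response carries both a stance and its letter codes, connecting the two amounts to a simple tally. The sketch below is one possible way to do this and is not the spreadsheet actually used; the sample rows and the function name are hypothetical, and the codes are assumed to have been assigned by hand as described above.

```python
from collections import Counter

# Hypothetical hand-coded rows: (stance, multiliteracies letter codes).
coded = [
    ("positive", {"R", "E"}),
    ("positive", {"R"}),
    ("undecided", {"E"}),
    ("negative", set()),
]


def tally_by_stance(rows):
    """Count how often each letter code appears under each stance."""
    table = Counter()
    for stance, codes in rows:
        for code in codes:
            table[(stance, code)] += 1
    return table


table = tally_by_stance(coded)
for (stance, code), count in sorted(table.items()):
    print(f"{stance} / {code}: {count}")
```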

This part of the process helped me notice some additional themes:

- Some of the WPAs who were undecided were still committed to using technology for research. Those WPAs also tended to rank ethical digital literacy high. These trends further support the notion that digital literacies are being connected to finding and evaluating information online. This is certainly important, but as I discuss in the Implications section, there are other aspects of digital literacy to consider, as well as other aspects of ethical digital literacy to explore.

- Some of the WPAs who indicated that digital literacy is formally encouraged in their programs had responses to the question "What role should digital literacy play in the composition curriculum?" that were categorized as undecided. The most common concern seemed to be that while these WPAs encourage digital literacy in their programs, they do not want it to be a separate consideration that takes time away from students learning about alphabetic composing. This trend held true in a few of the responses to the implementation and multiliteracies questions, too.

- Many of the WPAs in this study tend to focus on program goals first and then determine how technologies can be used to meet these goals. This was perhaps most evident when looking at the undecided responses to what role digital literacy should play. Surprisingly, when compared with the question about programmatic implementations, most of the undecided respondents stated they do formally encourage digital literacy. Their responses, however, tended to reflect that the priority was helping students improve rhetorical literacies such as audience awareness, and technology was "just one approach among many for doing that," as one respondent indicated. In the New Literacies section of this webtext, I discuss this outcomes-first, technology-second perspective in more detail.

Multiliteracies

I have already discussed above some of the ways in which I mapped the multiliteracies information onto other questions, which helped provide context for the quantitative and qualitative data.